Tasks

- 1: Quickstart

- 2: Traffic

- 2.1: Backend Routing

- 2.2: Circuit Breakers

- 2.3: Client Traffic Policy

- 2.4: Connection Limit

- 2.5: Direct Response

- 2.6: Failover

- 2.7: Fault Injection

- 2.8: Gateway Address

- 2.9: Gateway API Support

- 2.10: Global Rate Limit

- 2.11: GRPC Routing

- 2.12: HTTP Redirects

- 2.13: HTTP Request Headers

- 2.14: HTTP Response Headers

- 2.15: HTTP Routing

- 2.16: HTTP Timeouts

- 2.17: HTTP URL Rewrite

- 2.18: HTTP3

- 2.19: HTTPRoute Request Mirroring

- 2.20: HTTPRoute Traffic Splitting

- 2.21: Load Balancing

- 2.22: Local Rate Limit

- 2.23: Multicluster Service Routing

- 2.24: Response Override

- 2.25: Retry

- 2.26: Routing outside Kubernetes

- 2.27: TCP Routing

- 2.28: UDP Routing

- 3: Security

- 3.1: Accelerating TLS Handshakes using Private Key Provider in Envoy

- 3.2: Backend Mutual TLS: Gateway to Backend

- 3.3: Backend TLS: Gateway to Backend

- 3.4: Basic Authentication

- 3.5: CORS

- 3.6: External Authorization

- 3.7: IP Allowlist/Denylist

- 3.8: JWT Authentication

- 3.9: JWT Claim-Based Authorization

- 3.10: Mutual TLS: External Clients to the Gateway

- 3.11: OIDC Authentication

- 3.12: Secure Gateways

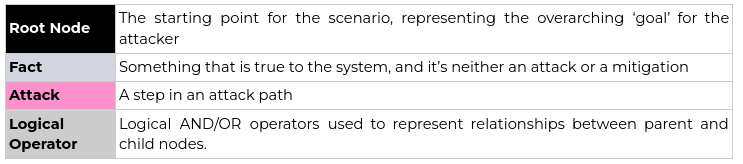

- 3.13: Threat Model

- 3.14: TLS Passthrough

- 3.15: TLS Termination for TCP

- 3.16: Using cert-manager For TLS Termination

- 4: Extensibility

- 4.1: Build a Wasm image

- 4.2: Envoy Patch Policy

- 4.3: Envoy Gateway Extension Server

- 4.4: External Processing

- 4.5: Wasm Extensions

- 5: Observability

- 5.1: Gateway API Metrics

- 5.2: Gateway Exported Metrics

- 5.3: Gateway Observability

- 5.4: Proxy Access Logs

- 5.5: Proxy Metrics

- 5.6: Proxy Tracing

- 5.7: RateLimit Observability

- 5.8: Visualising metrics using Grafana

- 6: Operations

- 6.1: Customize EnvoyProxy

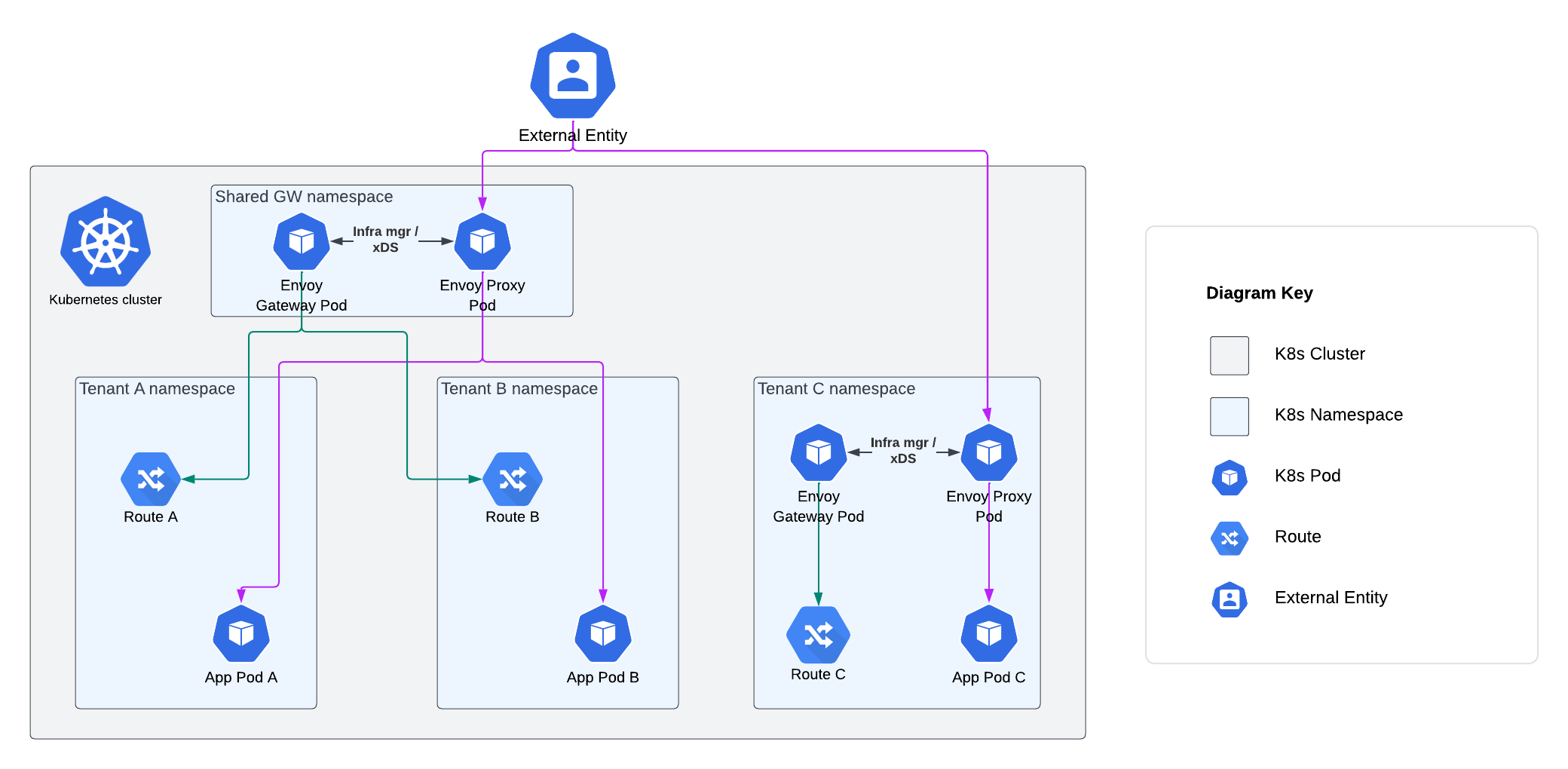

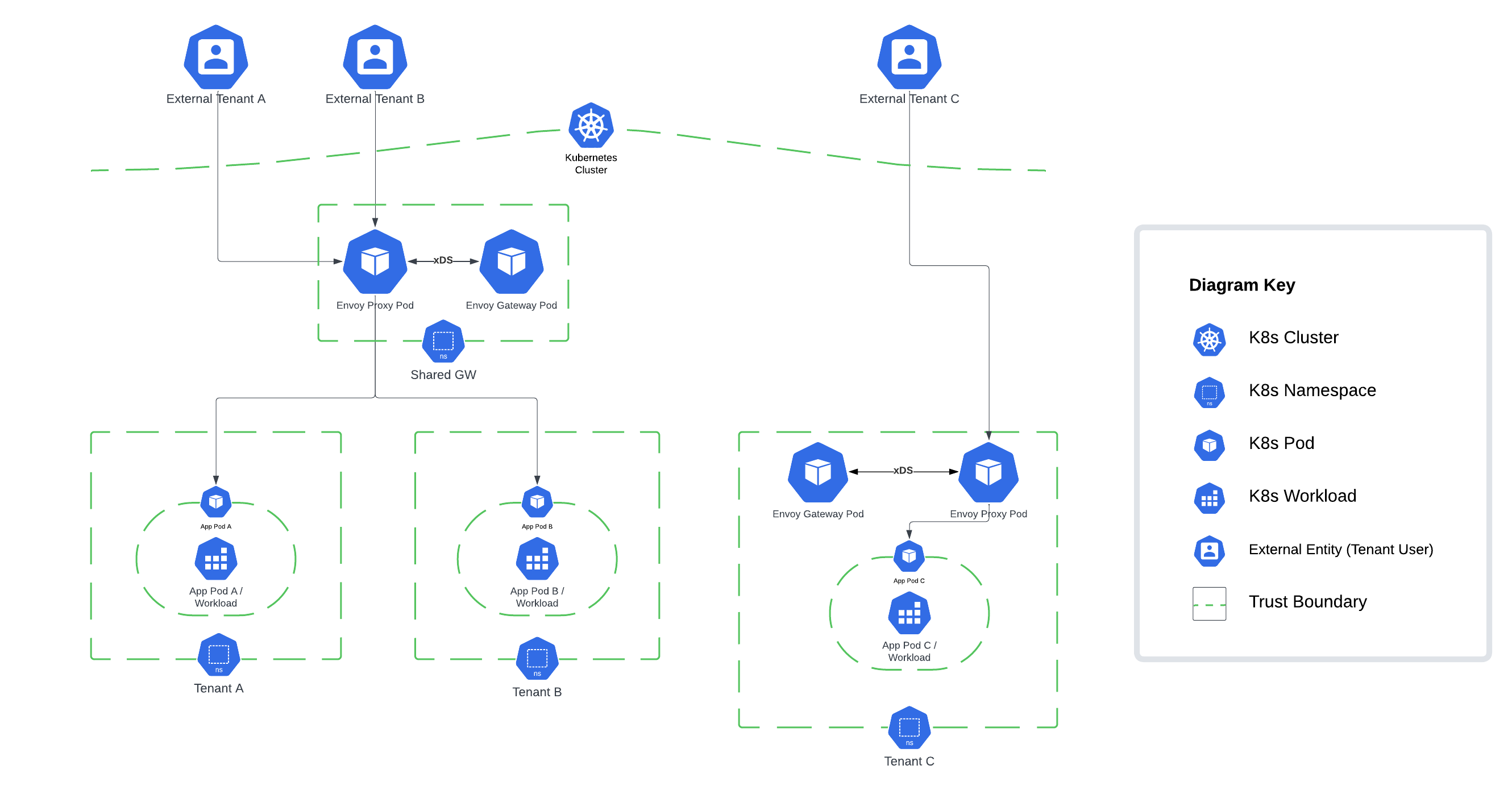

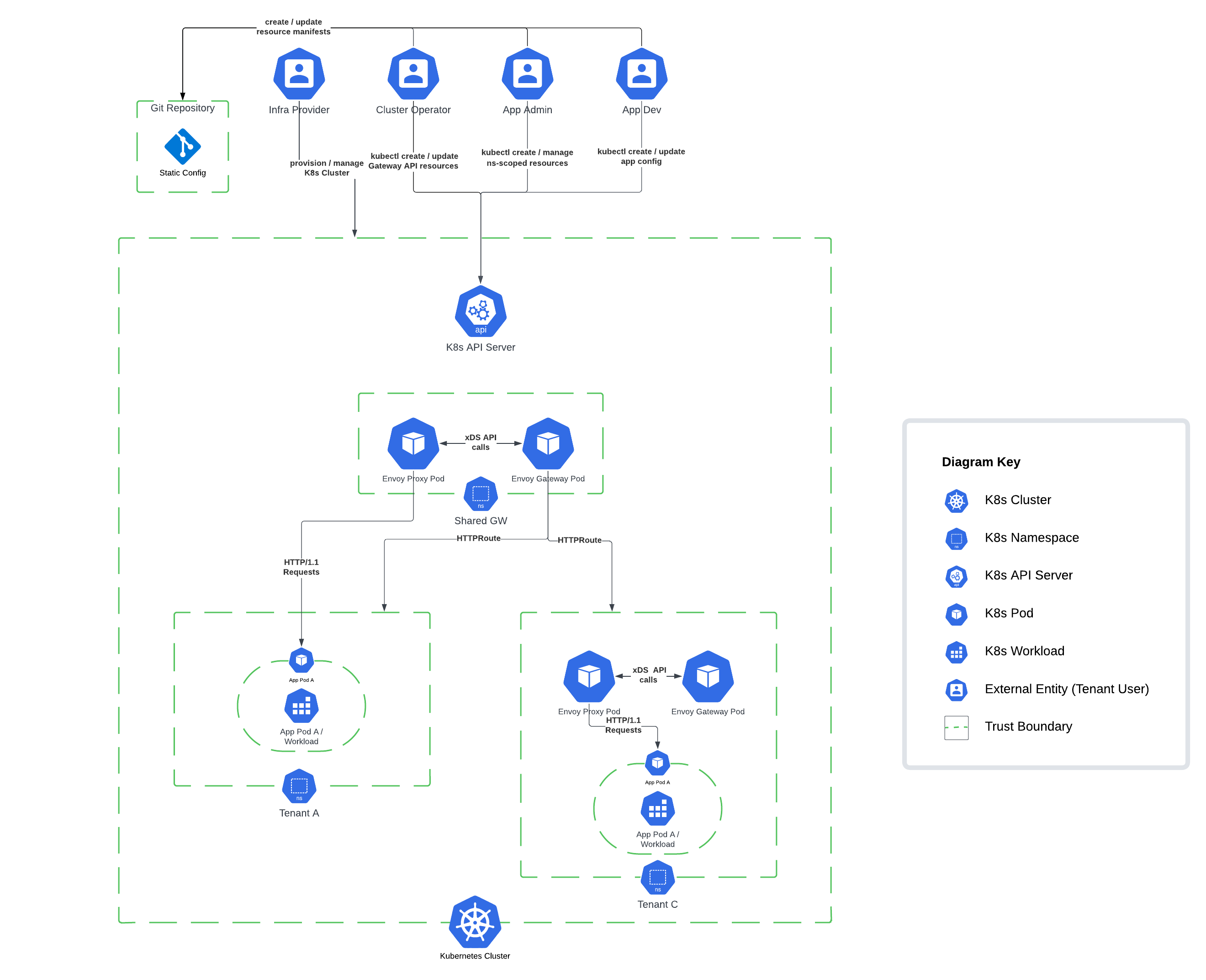

- 6.2: Deployment Mode

- 6.3: Standalone Deployment Mode

- 6.4: Use egctl

1 - Quickstart

This “quick start” will help you get started with Envoy Gateway in a few simple steps.

Prerequisites

A Kubernetes cluster.

Note: Refer to the Compatibility Matrix for supported Kubernetes versions.

Note: If your Kubernetes cluster does not have a LoadBalancer implementation, we recommend installing one so that the Gateway resource has an Address associated with it. We recommend using MetalLB.

Note: Mac users need to install and run Docker Mac Net Connect to make the Docker network work.

Installation

Install the Gateway API CRDs and Envoy Gateway:

helm install eg oci://docker.io/envoyproxy/gateway-helm --version v1.2.6 -n envoy-gateway-system --create-namespace

Wait for Envoy Gateway to become available:

kubectl wait --timeout=5m -n envoy-gateway-system deployment/envoy-gateway --for=condition=Available

Install the GatewayClass, Gateway, HTTPRoute and example app:

kubectl apply -f https://github.com/envoyproxy/gateway/releases/download/v1.2.6/quickstart.yaml -n default

Note: quickstart.yaml defines that Envoy Gateway will listen for traffic on port 80 on its globally-routable IP address, to make it easy to use browsers to test Envoy Gateway. When Envoy Gateway sees that its Listener is using a privileged port (<1024), it will map this internally to an unprivileged port, so that Envoy Gateway doesn’t need additional privileges. It’s important to be aware of this mapping, since you may need to take it into consideration when debugging.
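
The port mapping described in the note can be sketched as a small shell function. This is only a minimal sketch: the helper name `container_port` is hypothetical, and the fixed offset of 10000 (so that 80 becomes 10080) is an assumption that quickstart.yaml does not state. Confirm the actual ports against the Envoy proxy pod spec when debugging.

```shell
#!/bin/sh
# Hypothetical helper: estimate the unprivileged container port that a
# Gateway listener port maps to inside the Envoy proxy pod.
# ASSUMPTION: privileged ports (<1024) are shifted by a fixed +10000.
container_port() {
  port="$1"
  if [ "$port" -lt 1024 ]; then
    echo $((port + 10000))
  else
    # Ports at or above 1024 are assumed to be used unchanged.
    echo "$port"
  fi
}

container_port 80     # listener port used by quickstart.yaml
container_port 8080   # already unprivileged
```

If the assumption holds, the value printed for port 80 is the port to look for in the Envoy listener configuration or in container-level logs.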

Testing the Configuration

If your cluster has external LoadBalancer support, you can test by sending traffic to the External IP. To get the external IP of the Envoy service, run:

export GATEWAY_HOST=$(kubectl get gateway/eg -o jsonpath='{.status.addresses[0].value}')

In certain environments, the load balancer may be exposed using a hostname instead of an IP address. The command above reads the first address from the Gateway status, which may be either an IP address or a hostname.

Curl the example app through Envoy proxy:

curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/get

If your cluster has no LoadBalancer support, you can port-forward to the Envoy service instead. Get the name of the Envoy service created by the example Gateway:

export ENVOY_SERVICE=$(kubectl get svc -n envoy-gateway-system --selector=gateway.envoyproxy.io/owning-gateway-namespace=default,gateway.envoyproxy.io/owning-gateway-name=eg -o jsonpath='{.items[0].metadata.name}')

Port forward to the Envoy service:

kubectl -n envoy-gateway-system port-forward service/${ENVOY_SERVICE} 8888:80 &

Curl the example app through Envoy proxy:

curl --verbose --header "Host: www.example.com" http://localhost:8888/get

What to explore next?

In this quickstart, you have:

- Installed Envoy Gateway

- Deployed a backend service, and a gateway

- Configured the gateway using the Kubernetes Gateway API resources Gateway and HTTPRoute to direct incoming requests over HTTP to the backend service.

Here is a suggested list of follow-on tasks to guide you in your exploration of Envoy Gateway:

Review the Tasks section for the scenario matching your use case. The Envoy Gateway tasks are organized by category: traffic management, security, extensibility, observability, and operations.

Clean-Up

Use the steps in this section to uninstall everything from the quickstart.

Delete the GatewayClass, Gateway, HTTPRoute and Example App:

kubectl delete -f https://github.com/envoyproxy/gateway/releases/download/v1.2.6/quickstart.yaml --ignore-not-found=true

Delete the Gateway API CRDs and Envoy Gateway:

helm uninstall eg -n envoy-gateway-system

Next Steps

Check out the Developer Guide to get involved in the project.

2 - Traffic

2.1 - Backend Routing

Envoy Gateway supports routing to native K8s resources such as Service and ServiceImport. The Backend API is a custom Envoy Gateway extension resource that can be used in a Gateway API BackendObjectReference.

Motivation

The Backend API was added to support several use cases:

- Allowing users to integrate Envoy with services (Ext Auth, Rate Limit, ALS, …) using Unix Domain Sockets, which are currently not supported by K8s.

- Simplify routing to cluster-external backends, which currently requires users to maintain both K8s Service and EndpointSlice resources.

Warning

Similar to the K8s EndpointSlice API, the Backend API can be misused to allow traffic to be sent to otherwise restricted destinations, as described in CVE-2021-25740. A Backend resource can be used to:

- Expose a Service or Pod that should not be accessible

- Reference a Service or Pod by a Route without appropriate Reference Grants

- Expose the Envoy Proxy localhost (including the Envoy admin endpoint)

For these reasons, the Backend API is disabled by default in Envoy Gateway configuration. Envoy Gateway admins are advised to follow upstream recommendations and restrict access to the Backend API using K8s RBAC.

Restrictions

The Backend API is currently supported only in the following BackendReferences:

- HTTPRoute: IP and FQDN endpoints

- TLSRoute: IP and FQDN endpoints

- Envoy Extension Policy (ExtProc): IP, FQDN and unix domain socket endpoints

- Security Policy: IP and FQDN endpoints for the OIDC providers

The Backend API supports attachment of the following policies:

Certain restrictions apply on the value of hostnames and addresses. For example, the loopback IP address range and the localhost hostname are forbidden.

Envoy Gateway does not manage the lifecycle of unix domain sockets referenced by the Backend resource. Envoy Gateway admins are responsible for creating and mounting the sockets into the envoy proxy pod. The latter can be achieved by patching the envoy deployment using the EnvoyProxy resource.

Quickstart

Prerequisites

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Enable Backend

By default, Backend is disabled. Let's enable it in the EnvoyGateway startup configuration.

The default installation of Envoy Gateway installs a default EnvoyGateway configuration and attaches it using a ConfigMap. In the next step, we will update this resource to enable Backend.

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: ConfigMap
metadata:
  name: envoy-gateway-config
  namespace: envoy-gateway-system
data:
  envoy-gateway.yaml: |
    apiVersion: gateway.envoyproxy.io/v1alpha1
    kind: EnvoyGateway
    provider:
      type: Kubernetes
    gateway:
      controllerName: gateway.envoyproxy.io/gatewayclass-controller
    extensionApis:
      enableBackend: true
EOF

Save and apply the following resource to your cluster:

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: envoy-gateway-config
  namespace: envoy-gateway-system
data:
  envoy-gateway.yaml: |
    apiVersion: gateway.envoyproxy.io/v1alpha1
    kind: EnvoyGateway
    provider:
      type: Kubernetes
    gateway:
      controllerName: gateway.envoyproxy.io/gatewayclass-controller
    extensionApis:
      enableBackend: true

After updating the ConfigMap, you will need to wait for the configuration to take effect. You can force the configuration to be reloaded by restarting the envoy-gateway deployment:

kubectl rollout restart deployment envoy-gateway -n envoy-gateway-system

Testing

Route to External Backend

- In the following example, we will create a Backend resource that routes to httpbin.org:80 and an HTTPRoute that references this backend.

cat <<EOF | kubectl apply -f -
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: backend
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - backendRefs:
        - group: gateway.envoyproxy.io
          kind: Backend
          name: httpbin
      matches:
        - path:
            type: PathPrefix
            value: /
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: Backend
metadata:
  name: httpbin
  namespace: default
spec:
  endpoints:
    - fqdn:
        hostname: httpbin.org
        port: 80
EOF

Save and apply the following resources to your cluster:

---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: backend
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - backendRefs:
        - group: gateway.envoyproxy.io
          kind: Backend
          name: httpbin
      matches:
        - path:
            type: PathPrefix
            value: /
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: Backend
metadata:
  name: httpbin
  namespace: default
spec:
  endpoints:
    - fqdn:
        hostname: httpbin.org
        port: 80

Get the Gateway address:

export GATEWAY_HOST=$(kubectl get gateway/eg -o jsonpath='{.status.addresses[0].value}')

Send a request and view the response:

curl -I -HHost:www.example.com http://${GATEWAY_HOST}/headers

2.2 - Circuit Breakers

Envoy circuit breakers can be used to fail quickly and apply back-pressure in response to upstream service degradation.

Envoy Gateway supports the following circuit breaker thresholds:

- Concurrent Connections: limit the connections that Envoy can establish to the upstream service. When this threshold is met, new connections will not be established, and some requests will be queued until an existing connection becomes available.

- Concurrent Requests: limit on concurrent requests in-flight from Envoy to the upstream service. When this threshold is met, requests will be queued.

- Pending Requests: limit the pending request queue size. When this threshold is met, overflowing requests will be terminated with a 503 status code.

Envoy’s circuit breakers are distributed: counters are not synchronized across different Envoy processes. The default Envoy and Envoy Gateway circuit breaker threshold values (1024) may be too strict for high-throughput systems.

Envoy Gateway introduces a new CRD called BackendTrafficPolicy that allows the user to describe their desired circuit breaker thresholds. This instantiated resource can be linked to a Gateway, HTTPRoute or GRPCRoute resource.

Note: There are distinct circuit breaker counters for each BackendReference in an xRoute rule. Even if a BackendTrafficPolicy targets a Gateway, each BackendReference in that gateway still has a separate circuit breaker counter.

Prerequisites

Install Envoy Gateway

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Install the hey load testing tool

- The hey CLI will be used to generate load and measure response times. Follow the installation instructions from the Hey project docs.

Test and customize circuit breaker settings

This example will simulate a degraded backend that responds within 10 seconds by adding the ?delay=10s query parameter to API calls. The hey tool will be used to generate 100 concurrent requests.

hey -n 100 -c 100 -host "www.example.com" http://${GATEWAY_HOST}/?delay=10s

Summary:

Total: 10.3426 secs

Slowest: 10.3420 secs

Fastest: 10.0664 secs

Average: 10.2145 secs

Requests/sec: 9.6687

Total data: 36600 bytes

Size/request: 366 bytes

Response time histogram:

10.066 [1] |■■■■

10.094 [4] |■■■■■■■■■■■■■■■

10.122 [9] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.149 [10] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.177 [10] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.204 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.232 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.259 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.287 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.314 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

10.342 [11] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

The default circuit breaker threshold (1024) is not met. As a result, requests do not overflow: all requests are proxied upstream and both Envoy and clients wait for 10s.

In order to fail fast, apply a BackendTrafficPolicy that limits concurrent requests to 10 and pending requests to 0.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: circuitbreaker-for-route
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: backend
  circuitBreaker:
    maxPendingRequests: 0
    maxParallelRequests: 10
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: circuitbreaker-for-route
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: backend
  circuitBreaker:
    maxPendingRequests: 0
    maxParallelRequests: 10

Execute the load simulation again.

hey -n 100 -c 100 -host "www.example.com" http://${GATEWAY_HOST}/?delay=10s

Summary:

Total: 10.1230 secs

Slowest: 10.1224 secs

Fastest: 0.0529 secs

Average: 1.0677 secs

Requests/sec: 9.8785

Total data: 10940 bytes

Size/request: 109 bytes

Response time histogram:

0.053 [1] |

1.060 [89] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

2.067 [0] |

3.074 [0] |

4.081 [0] |

5.088 [0] |

6.095 [0] |

7.102 [0] |

8.109 [0] |

9.115 [0] |

10.122 [10] |■■■■

With the new circuit breaker settings, and due to the slowness of the backend, only the first 10 concurrent requests were proxied, while the other 90 overflowed.

- Overflowing Requests failed fast, reducing proxy resource consumption.

- Upstream traffic was limited, alleviating the pressure on the degraded service.
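
The split between proxied and overflowed requests seen in the second run can be approximated with a little arithmetic. The following is a minimal sketch, not Envoy's actual accounting: the helper name `circuit_breaker_split` is hypothetical, and it assumes that all requests arrive at once and that the backend is slow enough that no in-flight request completes during the burst.

```shell
#!/bin/sh
# Hypothetical helper: estimate how a burst of concurrent requests is split
# between proxied, queued, and overflowed (503) requests.
# ASSUMPTION: the whole burst arrives at once and nothing completes meanwhile.
circuit_breaker_split() {
  burst="$1"; max_parallel="$2"; max_pending="$3"

  # Requests up to maxParallelRequests are proxied upstream.
  proxied=$burst
  if [ "$proxied" -gt "$max_parallel" ]; then
    proxied=$max_parallel
  fi

  # The remainder is queued up to maxPendingRequests; the rest overflows.
  rest=$((burst - proxied))
  queued=$rest
  if [ "$queued" -gt "$max_pending" ]; then
    queued=$max_pending
  fi
  overflowed=$((rest - queued))

  echo "proxied=$proxied queued=$queued overflowed=$overflowed"
}

# Values from this task: 100 concurrent requests, maxParallelRequests 10,
# maxPendingRequests 0.
circuit_breaker_split 100 10 0
```

Under these assumptions the sketch reproduces the 10 proxied and 90 overflowed requests observed with hey. With the default thresholds (1024), the same burst is proxied in full.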

2.3 - Client Traffic Policy

This task explains the usage of the ClientTrafficPolicy API.

Introduction

The ClientTrafficPolicy API allows system administrators to configure how the Envoy Proxy server behaves with downstream clients.

Motivation

This API was added as a new policy attachment resource that can be applied to Gateway resources and it is meant to hold settings for configuring behavior of the connection between the downstream client and Envoy Proxy listener. It is the counterpart to the BackendTrafficPolicy API resource.

Quickstart

Prerequisites

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Support TCP keepalive for downstream client

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: enable-tcp-keepalive-policy
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  tcpKeepalive:
    idleTime: 20m
    interval: 60s
    probes: 3
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: enable-tcp-keepalive-policy
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  tcpKeepalive:
    idleTime: 20m
    interval: 60s
    probes: 3
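
To get a feel for what these three settings mean together, the sketch below estimates how long an unresponsive client can go unnoticed. It is only an approximation: the helper name `keepalive_detection_seconds` is hypothetical, and it assumes standard TCP keepalive behavior (an idle period, then one probe per interval until the configured number of probes has gone unanswered).

```shell
#!/bin/sh
# Hypothetical helper: rough worst-case time, in seconds, before an
# unresponsive peer is detected with the given TCP keepalive settings.
# ASSUMPTION: detection time = idle time + (probe interval * probe count).
keepalive_detection_seconds() {
  idle_seconds="$1"; interval_seconds="$2"; probes="$3"
  echo $((idle_seconds + interval_seconds * probes))
}

# Values from the policy above: idleTime 20m, interval 60s, probes 3.
keepalive_detection_seconds 1200 60 3
```

For the policy in this task, that works out to 23 minutes (1380 seconds).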

Verify that ClientTrafficPolicy is Accepted:

kubectl get clienttrafficpolicies.gateway.envoyproxy.io -n default

You should see the policy marked as accepted like this:

NAME STATUS AGE

enable-tcp-keepalive-policy Accepted 5s

Curl the example app through Envoy proxy once again:

curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/get --next --header "Host: www.example.com" http://$GATEWAY_HOST/get

You should see the output like this:

* Trying 172.18.255.202:80...

* Connected to 172.18.255.202 (172.18.255.202) port 80 (#0)

> GET /get HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.1.2

> Accept: */*

>

< HTTP/1.1 200 OK

< content-type: application/json

< x-content-type-options: nosniff

< date: Fri, 01 Dec 2023 10:17:04 GMT

< content-length: 507

< x-envoy-upstream-service-time: 0

< server: envoy

<

{

"path": "/get",

"host": "www.example.com",

"method": "GET",

"proto": "HTTP/1.1",

"headers": {

"Accept": [

"*/*"

],

"User-Agent": [

"curl/8.1.2"

],

"X-Envoy-Expected-Rq-Timeout-Ms": [

"15000"

],

"X-Envoy-Internal": [

"true"

],

"X-Forwarded-For": [

"172.18.0.2"

],

"X-Forwarded-Proto": [

"http"

],

"X-Request-Id": [

"4d0d33e8-d611-41f0-9da0-6458eec20fa5"

]

},

"namespace": "default",

"ingress": "",

"service": "",

"pod": "backend-58d58f745-2zwvn"

* Connection #0 to host 172.18.255.202 left intact

}* Found bundle for host: 0x7fb9f5204ea0 [serially]

* Can not multiplex, even if we wanted to

* Re-using existing connection #0 with host 172.18.255.202

> GET /headers HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.1.2

> Accept: */*

>

< HTTP/1.1 200 OK

< content-type: application/json

< x-content-type-options: nosniff

< date: Fri, 01 Dec 2023 10:17:04 GMT

< content-length: 511

< x-envoy-upstream-service-time: 0

< server: envoy

<

{

"path": "/headers",

"host": "www.example.com",

"method": "GET",

"proto": "HTTP/1.1",

"headers": {

"Accept": [

"*/*"

],

"User-Agent": [

"curl/8.1.2"

],

"X-Envoy-Expected-Rq-Timeout-Ms": [

"15000"

],

"X-Envoy-Internal": [

"true"

],

"X-Forwarded-For": [

"172.18.0.2"

],

"X-Forwarded-Proto": [

"http"

],

"X-Request-Id": [

"9a8874c0-c117-481c-9b04-933571732ca5"

]

},

"namespace": "default",

"ingress": "",

"service": "",

"pod": "backend-58d58f745-2zwvn"

* Connection #0 to host 172.18.255.202 left intact

}

You can see that the connection was kept alive and reused in the following output lines:

* Connection #0 to host 172.18.255.202 left intact

* Re-using existing connection #0 with host 172.18.255.202

Enable Proxy Protocol for downstream client

This example configures Proxy Protocol for downstream clients.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: enable-proxy-protocol-policy
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  enableProxyProtocol: true
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: enable-proxy-protocol-policy
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  enableProxyProtocol: true

Verify that ClientTrafficPolicy is Accepted:

kubectl get clienttrafficpolicies.gateway.envoyproxy.io -n default

You should see the policy marked as accepted like this:

NAME STATUS AGE

enable-proxy-protocol-policy Accepted 5s

Try the endpoint without using PROXY protocol with curl:

curl -v --header "Host: www.example.com" http://$GATEWAY_HOST/get

* Trying 172.18.255.202:80...

* Connected to 172.18.255.202 (172.18.255.202) port 80 (#0)

> GET /get HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.1.2

> Accept: */*

>

* Recv failure: Connection reset by peer

* Closing connection 0

curl: (56) Recv failure: Connection reset by peer

Curl the example app through Envoy proxy once again, now sending the HAProxy PROXY protocol header at the beginning of the connection with the --haproxy-protocol flag:

curl --verbose --haproxy-protocol --header "Host: www.example.com" http://$GATEWAY_HOST/get

You should now expect 200 response status and also see that source IP was preserved in the X-Forwarded-For header.

* Trying 172.18.255.202:80...

* Connected to 172.18.255.202 (172.18.255.202) port 80 (#0)

> GET /get HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.1.2

> Accept: */*

>

< HTTP/1.1 200 OK

< content-type: application/json

< x-content-type-options: nosniff

< date: Mon, 04 Dec 2023 21:11:43 GMT

< content-length: 510

< x-envoy-upstream-service-time: 0

< server: envoy

<

{

"path": "/get",

"host": "www.example.com",

"method": "GET",

"proto": "HTTP/1.1",

"headers": {

"Accept": [

"*/*"

],

"User-Agent": [

"curl/8.1.2"

],

"X-Envoy-Expected-Rq-Timeout-Ms": [

"15000"

],

"X-Envoy-Internal": [

"true"

],

"X-Forwarded-For": [

"192.168.255.6"

],

"X-Forwarded-Proto": [

"http"

],

"X-Request-Id": [

"290e4b61-44b7-4e5c-a39c-0ec76784e897"

]

},

"namespace": "default",

"ingress": "",

"service": "",

"pod": "backend-58d58f745-2zwvn"

* Connection #0 to host 172.18.255.202 left intact

}

Configure Client IP Detection

This example configures the number of additional ingress proxy hops from the right side of XFF HTTP headers to trust when determining the origin client’s IP address and determines whether or not x-forwarded-proto headers will be trusted. Refer to https://www.envoyproxy.io/docs/envoy/latest/configuration/http/http_conn_man/headers#x-forwarded-for for details.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: http-client-ip-detection
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  clientIPDetection:
    xForwardedFor:
      numTrustedHops: 2
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: http-client-ip-detection
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  clientIPDetection:
    xForwardedFor:
      numTrustedHops: 2

Verify that ClientTrafficPolicy is Accepted:

kubectl get clienttrafficpolicies.gateway.envoyproxy.io -n default

You should see the policy marked as accepted like this:

NAME STATUS AGE

http-client-ip-detection Accepted 5s

Open port-forward to the admin interface port:

kubectl port-forward deploy/${ENVOY_DEPLOYMENT} -n envoy-gateway-system 19000:19000

Curl the admin interface port to fetch the configured value for xff_num_trusted_hops:

curl -s 'http://localhost:19000/config_dump?resource=dynamic_listeners' \
  | jq -r '.configs[0].active_state.listener.default_filter_chain.filters[0].typed_config
      | with_entries(select(.key | match("xff|remote_address|original_ip")))'

You should expect to see the following:

{

"use_remote_address": true,

"xff_num_trusted_hops": 2

}

Curl the example app through Envoy proxy:

curl -v http://$GATEWAY_HOST/get \
  -H "Host: www.example.com" \
  -H "X-Forwarded-Proto: https" \
  -H "X-Forwarded-For: 1.1.1.1,2.2.2.2"

You should expect 200 response status, see that X-Forwarded-Proto was preserved and X-Envoy-External-Address was set to the leftmost address in the X-Forwarded-For header:

* Trying [::1]:8888...

* Connected to localhost (::1) port 8888

> GET /get HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.4.0

> Accept: */*

> X-Forwarded-Proto: https

> X-Forwarded-For: 1.1.1.1,2.2.2.2

>

Handling connection for 8888

< HTTP/1.1 200 OK

< content-type: application/json

< x-content-type-options: nosniff

< date: Tue, 30 Jan 2024 15:19:22 GMT

< content-length: 535

< x-envoy-upstream-service-time: 0

< server: envoy

<

{

"path": "/get",

"host": "www.example.com",

"method": "GET",

"proto": "HTTP/1.1",

"headers": {

"Accept": [

"*/*"

],

"User-Agent": [

"curl/8.4.0"

],

"X-Envoy-Expected-Rq-Timeout-Ms": [

"15000"

],

"X-Envoy-External-Address": [

"1.1.1.1"

],

"X-Forwarded-For": [

"1.1.1.1,2.2.2.2,10.244.0.9"

],

"X-Forwarded-Proto": [

"https"

],

"X-Request-Id": [

"53ccfad7-1899-40fa-9322-ddb833aa1ac3"

]

},

"namespace": "default",

"ingress": "",

"service": "",

"pod": "backend-58d58f745-8psnc"

* Connection #0 to host localhost left intact

}
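
The way numTrustedHops selects an address from the X-Forwarded-For header can be sketched in shell. This is a minimal sketch, not Envoy's implementation: the helper name `trusted_client_address` is hypothetical, and it assumes that use_remote_address is true (as in the config dump above), in which case the trusted client address is taken to be the Nth entry from the right of the header as sent by the client, where N is numTrustedHops.

```shell
#!/bin/sh
# Hypothetical helper: pick the trusted client address from the
# X-Forwarded-For value that the client sent.
# ASSUMPTION: with use_remote_address enabled, the trusted address is the
# Nth entry from the right of the received header (N = numTrustedHops).
trusted_client_address() {
  xff="$1"; hops="$2"
  printf '%s\n' "$xff" | awk -F',' -v n="$hops" '{
    idx = NF - n + 1
    # Print nothing when the header has fewer than n entries or n is 0.
    if (idx >= 1 && idx <= NF) {
      gsub(/^[ \t]+|[ \t]+$/, "", $idx)   # trim optional whitespace
      print $idx
    }
  }'
}

# Header and hop count used in this task.
trusted_client_address "1.1.1.1,2.2.2.2" 2
```

With the header from this task and numTrustedHops set to 2, the sketch selects 1.1.1.1, which matches the X-Envoy-External-Address value in the response above. With a hop count of 1 it would select 2.2.2.2 instead.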

Enable HTTP Request Received Timeout

This feature allows you to limit the time taken by the Envoy Proxy fleet to receive the entire request from the client, which is useful in preventing certain clients from consuming too much memory in Envoy. This example configures the HTTP request timeout for the client; please check out the details here.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  timeout:
    http:
      requestReceivedTimeout: 2s
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  timeout:
    http:
      requestReceivedTimeout: 2s

Curl the example app through Envoy proxy:

curl -v http://$GATEWAY_HOST/get \
  -H "Host: www.example.com" \
  -H "Content-Length: 10000"

You should expect a 408 response status after 2s:

curl -v http://$GATEWAY_HOST/get \

-H "Host: www.example.com" \

-H "Content-Length: 10000"

* Trying 172.18.255.200:80...

* Connected to 172.18.255.200 (172.18.255.200) port 80

> GET /get HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.4.0

> Accept: */*

> Content-Length: 10000

>

< HTTP/1.1 408 Request Timeout

< content-length: 15

< content-type: text/plain

< date: Tue, 27 Feb 2024 07:38:27 GMT

< connection: close

<

* Closing connection

request timeout

Configure Client HTTP Idle Timeout

The idle timeout is defined as the period in which there are no active requests. When the idle timeout is reached the connection will be closed. For more details see here.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  timeout:
    http:
      idleTimeout: 5s
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  timeout:
    http:
      idleTimeout: 5s

Open a connection to the Gateway and leave it idle:

openssl s_client -crlf -connect $GATEWAY_HOST:443

You should expect the connection to be closed after 5s.

You can also check the number of connections closed due to idle timeout by using the following query:

envoy_http_downstream_cx_idle_timeout{envoy_http_conn_manager_prefix="<name of connection manager>"}

The number of connections closed due to idle timeout should be increased by 1.

Configure Downstream Per Connection Buffer Limit

This feature allows you to set a soft limit on size of the listener’s new connection read and write buffers.

The size is configured using the resource.Quantity format, see examples here.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  connection:
    bufferLimit: 1024
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: client-timeout
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  connection:
    bufferLimit: 1024

2.4 - Connection Limit

The connection limit feature allows users to limit the number of concurrently active TCP connections on a Gateway or a Listener. When the connection limit is reached, new connections are closed immediately by Envoy proxy. It’s possible to configure a delay for connection rejection.

Users may want to limit the number of connections for several reasons:

- Protect resources like CPU and Memory.

- Ensure that different listeners can receive a fair share of global resources.

- Protect from malicious activity like DoS attacks.

Envoy Gateway introduces a new CRD called Client Traffic Policy that allows the user to describe their desired connection limit settings. This instantiated resource can be linked to a Gateway.

The Envoy connection limit implementation is distributed: counters are not synchronized between different envoy proxies.

When a Client Traffic Policy is attached to a gateway, the connection limit will apply differently based on the Listener protocol in use:

- HTTP: all HTTP listeners in a Gateway will share a common connection counter, and a limit defined by the policy.

- HTTPS/TLS: each HTTPS/TLS listener will have a dedicated connection counter, and a limit defined by the policy.

Prerequisites

Install Envoy Gateway

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Install the hey load testing tool

- The hey CLI will be used to generate load and measure response times. Follow the installation instructions from the Hey project docs.

Test and customize connection limit settings

In this example, we use hey to open 10 connections and execute 1 RPS per connection for 10 seconds.

hey -c 10 -q 1 -z 10s -host "www.example.com" http://${GATEWAY_HOST}/get

Summary:

Total: 10.0058 secs

Slowest: 0.0275 secs

Fastest: 0.0029 secs

Average: 0.0111 secs

Requests/sec: 9.9942

[...]

Status code distribution:

[200] 100 responses

There are no connection limits, and so all 100 requests succeed.

Next, we apply a limit of 5 connections.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: connection-limit-ctp
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  connection:
    connectionLimit:
      value: 5
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: ClientTrafficPolicy
metadata:
  name: connection-limit-ctp
  namespace: default
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
  connection:
    connectionLimit:
      value: 5

Execute the load simulation again.

hey -c 10 -q 1 -z 10s -host "www.example.com" http://${GATEWAY_HOST}/get

Summary:

Total: 11.0327 secs

Slowest: 0.0361 secs

Fastest: 0.0013 secs

Average: 0.0088 secs

Requests/sec: 9.0640

[...]

Status code distribution:

[200] 50 responses

Error distribution:

[50] Get "http://localhost:8888/get": EOF

With the new connection limit, only 5 of 10 connections are established, and so only 50 requests succeed.
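
The expected number of successful requests can be estimated before running the load test. This is a minimal sketch under simplifying assumptions: the helper name `expected_successes` is hypothetical, connections beyond the limit are assumed to be rejected for the whole run, and every accepted connection is assumed to complete its full share of requests.

```shell
#!/bin/sh
# Hypothetical helper: estimate successful requests for a hey run that
# opens a fixed number of connections at a fixed per-connection rate.
# ASSUMPTION: rejected connections stay rejected for the whole run, and
# accepted connections never drop a request.
expected_successes() {
  connections="$1"; limit="$2"; rps_per_conn="$3"; seconds="$4"

  # Only as many connections as the limit allows are established.
  accepted=$connections
  if [ "$accepted" -gt "$limit" ]; then
    accepted=$limit
  fi

  echo $((accepted * rps_per_conn * seconds))
}

# Values from this task: 10 connections, limit 5, 1 RPS each, 10 seconds.
expected_successes 10 5 1 10
```

For the values in this task the estimate is 50, in line with the status code distribution reported by hey. Real runs may deviate slightly, since hey may retry or reconnect differently than this model assumes.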

2.5 - Direct Response

Direct responses are valuable in cases where you want the gateway itself to handle certain requests without forwarding them to backend services. This task shows you how to configure them.

Installation

Follow the steps from the Quickstart to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Testing Direct Response

cat <<EOF | kubectl apply -f -
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: direct-response
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /inline
      filters:
        - type: ExtensionRef
          extensionRef:
            group: gateway.envoyproxy.io
            kind: HTTPRouteFilter
            name: direct-response-inline
    - matches:
        - path:
            type: PathPrefix
            value: /value-ref
      filters:
        - type: ExtensionRef
          extensionRef:
            group: gateway.envoyproxy.io
            kind: HTTPRouteFilter
            name: direct-response-value-ref
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: value-ref-response
data:
  response.body: '{"error": "Internal Server Error"}'
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: HTTPRouteFilter
metadata:
  name: direct-response-inline
spec:
  directResponse:
    contentType: text/plain
    statusCode: 503
    body:
      type: Inline
      inline: "Oops! Your request is not found."
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: HTTPRouteFilter
metadata:
  name: direct-response-value-ref
spec:
  directResponse:
    contentType: application/json
    statusCode: 500
    body:
      type: ValueRef
      valueRef:
        group: ""
        kind: ConfigMap
        name: value-ref-response
EOF

curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/inline

* Trying 127.0.0.1:80...

* Connected to 127.0.0.1 (127.0.0.1) port 80

> GET /inline HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.4.0

> Accept: */*

>

< HTTP/1.1 503 Service Unavailable

< content-type: text/plain

< content-length: 32

< date: Sat, 02 Nov 2024 00:35:48 GMT

<

* Connection #0 to host 127.0.0.1 left intact

Oops! Your request is not found.

curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/value-ref

* Trying 127.0.0.1:80...

* Connected to 127.0.0.1 (127.0.0.1) port 80

> GET /value-ref HTTP/1.1

> Host: www.example.com

> User-Agent: curl/8.4.0

> Accept: */*

>

< HTTP/1.1 500 Internal Server Error

< content-type: application/json

< content-length: 34

< date: Sat, 02 Nov 2024 00:35:55 GMT

<

* Connection #0 to host 127.0.0.1 left intact

{"error": "Internal Server Error"}
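One quick way to confirm that the gateway returned the configured body unchanged is to compare the content-length header with the byte length of the configured string. The sketch below does this locally without contacting the gateway. The helper name body_length is hypothetical, and the check assumes the response is not compressed.

```shell
#!/bin/sh
# body_length BODY
# Prints the number of bytes in BODY, which is what the content-length
# header should report for an uncompressed direct response.
body_length() {
  printf '%s' "$1" | wc -c | tr -d '[:space:]'
}

body_length 'Oops! Your request is not found.'
echo
body_length '{"error": "Internal Server Error"}'
echo
```

The two calls print 32 and 34, the same values as the content-length headers in the two curl responses.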

2.6 - Failover

Active-passive failover in an API gateway setup is like having a backup plan in place to keep things running smoothly if something goes wrong. Here’s why it’s valuable:

- Staying Online: When the main (or “active”) backend has issues or goes offline, the fallback (or “passive”) backend is ready to step in instantly. This helps keep your API accessible and your services running, so users don’t even notice any interruptions.

- Automatic Switch Over: If a problem occurs, the system can automatically switch traffic over to the fallback backend. This avoids needing someone to jump in and fix things manually, which could take time and might even lead to mistakes.

- Lower Costs: In an active-passive setup, the fallback backend doesn’t need to work all the time; it’s just on standby. This can save on costs (like cloud egress costs) compared to setups where both backends are running at full capacity.

- Peace of Mind with Redundancy: Although the fallback backend isn’t handling traffic daily, it’s there as a safety net. If something happens with the primary backend, the backup can take over immediately, ensuring your service doesn’t skip a beat.

Prerequisites

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Test

- We’ll first create two Services and Deployments, called active and passive, representing an active and a passive backend application.

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Service
metadata:
  name: active
  labels:
    app: active
    service: active
spec:
  ports:
    - name: http
      port: 3000
      targetPort: 3000
  selector:
    app: active
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: active
spec:
  replicas: 1
  selector:
    matchLabels:
      app: active
      version: v1
  template:
    metadata:
      labels:
        app: active
        version: v1
    spec:
      containers:
        - image: gcr.io/k8s-staging-gateway-api/echo-basic:v20231214-v1.0.0-140-gf544a46e
          imagePullPolicy: IfNotPresent
          name: active
          ports:
            - containerPort: 3000
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
---
apiVersion: v1
kind: Service
metadata:
  name: passive
  labels:
    app: passive
    service: passive
spec:
  ports:
    - name: http
      port: 3000
      targetPort: 3000
  selector:
    app: passive
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: passive
spec:
  replicas: 1
  selector:
    matchLabels:
      app: passive
      version: v1
  template:
    metadata:
      labels:
        app: passive
        version: v1
    spec:
      containers:
        - image: gcr.io/k8s-staging-gateway-api/echo-basic:v20231214-v1.0.0-140-gf544a46e
          imagePullPolicy: IfNotPresent
          name: passive
          ports:
            - containerPort: 3000
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
EOF

- Follow the instructions in the Backend Routing task to enable the Backend API.

- Create two Backend resources that represent the active backend and the passive backend. Note that fallback: true is set on the passive backend to mark it as the fallback.

cat <<EOF | kubectl apply -f -
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: Backend
metadata:
  name: passive
spec:
  fallback: true
  endpoints:
    - fqdn:
        hostname: passive.default.svc.cluster.local
        port: 3000
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: Backend
metadata:
  name: active
spec:
  endpoints:
    - fqdn:
        hostname: active.default.svc.cluster.local
        port: 3000
---
EOF

- Let's create an HTTPRoute that can route to both of these backends.

cat <<EOF | kubectl apply -f -
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: ha-example
  namespace: default
spec:
  hostnames:
    - www.example.com
  parentRefs:
    - group: gateway.networking.k8s.io
      kind: Gateway
      name: eg
      namespace: default
  rules:
    - backendRefs:
        - group: gateway.envoyproxy.io
          kind: Backend
          name: active
          namespace: default
          port: 3000
        - group: gateway.envoyproxy.io
          kind: Backend
          name: passive
          namespace: default
          port: 3000
      matches:
        - path:
            type: PathPrefix
            value: /test
EOF

- Let's configure a BackendTrafficPolicy with a passive health check to detect transient errors.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: passive-health-check
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: ha-example
  healthCheck:
    passive:
      baseEjectionTime: 10s
      interval: 2s
      maxEjectionPercent: 100
      consecutive5XxErrors: 1
      consecutiveGatewayErrors: 0
      consecutiveLocalOriginFailures: 1
      splitExternalLocalOriginErrors: false
EOF

- Let's send 10 requests. You should see that they all go to the active backend.

for i in {1..10}; do curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/test 2>/dev/null | jq .pod; done

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

"active-5bb896774f-lz8s9"

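For larger request counts, a per-pod tally is easier to read than the raw list. The sketch below assumes the jq .pod output is piped in with one pod name per line. The helper name tally_pods is hypothetical, and the sample input is captured output rather than a live gateway.

```shell
#!/bin/sh
# tally_pods: read one pod name per line on stdin and print "COUNT NAME"
# for each distinct name. Hypothetical helper for illustration only.
tally_pods() {
  awk 'NF { count[$0]++ } END { for (name in count) print count[name], name }' | sort
}

# Example with captured output instead of a live gateway:
printf '%s\n' \
  '"active-5bb896774f-lz8s9"' \
  '"active-5bb896774f-lz8s9"' \
  '"passive-7ddbf945c9-wkc4f"' | tally_pods
```

Against a running gateway, the same helper can be appended to the request loop, for example `for i in {1..10}; do curl --header "Host: www.example.com" http://$GATEWAY_HOST/test 2>/dev/null | jq .pod; done | tally_pods`.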
- Let's simulate a failure in the active backend by changing the server listening port to 5000.

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: active
spec:
  replicas: 1
  selector:
    matchLabels:
      app: active
      version: v1
  template:
    metadata:
      labels:
        app: active
        version: v1
    spec:
      containers:
        - image: gcr.io/k8s-staging-gateway-api/echo-basic:v20231214-v1.0.0-140-gf544a46e
          imagePullPolicy: IfNotPresent
          name: active
          ports:
            - containerPort: 3000
          env:
            - name: HTTP_PORT
              value: "5000"
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
EOF

- Let's send 10 requests again. You should see them all being sent to the passive backend.

for i in {1..10}; do curl --verbose --header "Host: www.example.com" http://$GATEWAY_HOST/test 2>/dev/null | jq .pod; done

parse error: Invalid numeric literal at line 1, column 9

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

"passive-7ddbf945c9-wkc4f"

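The first request fails before the passive health check ejects the active backend, so the gateway returns an error body that jq cannot parse. This first error can be avoided by configuring retries.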
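As a minimal sketch of what such a retry configuration might look like, the script below writes a version of the passive-health-check policy that adds a retry section. It extends the existing policy rather than creating a second one, on the assumption that only one BackendTrafficPolicy should target the ha-example route. The file name, the numRetries value, and the choice of the connect-failure trigger are illustrative assumptions that have not been validated against this setup; consult the Retry task for the supported fields.

```shell
#!/bin/sh
# Write an updated passive-health-check policy that also enables retries.
# The retry values below are illustrative assumptions, not tested settings.
cat > passive-health-check-with-retry.yaml <<'EOF'
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: passive-health-check
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: ha-example
  retry:
    numRetries: 2
    retryOn:
      triggers:
        - connect-failure
  healthCheck:
    passive:
      baseEjectionTime: 10s
      interval: 2s
      maxEjectionPercent: 100
      consecutive5XxErrors: 1
      consecutiveGatewayErrors: 0
      consecutiveLocalOriginFailures: 1
      splitExternalLocalOriginErrors: false
EOF
echo "wrote passive-health-check-with-retry.yaml"
```

The file can then be applied with `kubectl apply -f passive-health-check-with-retry.yaml`, after which the request loop can be repeated to check whether the initial error disappears.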

2.7 - Fault Injection

Envoy fault injection can be used to inject delays and abort requests to mimic failure scenarios such as service failures and overloads.

Envoy Gateway supports the following fault scenarios:

- delay fault: inject a custom fixed delay into the request with a certain probability to simulate delay failures.

- abort fault: inject a custom response code into the response with a certain probability to simulate abort failures.

Envoy Gateway introduces a new CRD called BackendTrafficPolicy that allows the user to describe their desired fault scenarios. This instantiated resource can be linked to a Gateway, HTTPRoute or GRPCRoute resource.

Prerequisites

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

For GRPC - follow the steps from the GRPC Routing example.

Install the hey load testing tool

- The hey CLI will be used to generate load and measure response times. Follow the installation instructions from the Hey project docs.

Configuration

Configure fault injection by creating a BackendTrafficPolicy and attaching it to the example HTTPRoute or GRPCRoute.

HTTPRoute

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: fault-injection-50-percent-abort
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: foo
  faultInjection:
    abort:
      httpStatus: 501
      percentage: 50
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: fault-injection-delay
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: bar
  faultInjection:
    delay:
      fixedDelay: 2s
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: foo
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - backendRefs:
        - group: ""
          kind: Service
          name: backend
          port: 3000
          weight: 1
      matches:
        - path:
            type: PathPrefix
            value: /foo
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: bar
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - backendRefs:
        - group: ""
          kind: Service
          name: backend
          port: 3000
          weight: 1
      matches:
        - path:
            type: PathPrefix
            value: /bar
EOF

Two HTTPRoute resources were created, one for /foo and another for /bar. The fault-injection-50-percent-abort BackendTrafficPolicy targets HTTPRoute foo and aborts 50% of requests to /foo with HTTP status 501. The fault-injection-delay BackendTrafficPolicy targets HTTPRoute bar and delays requests to /bar by 2s.

Verify the HTTPRoute configuration and status:

kubectl get httproute/foo -o yaml

kubectl get httproute/bar -o yaml

Verify the BackendTrafficPolicy configuration:

kubectl get backendtrafficpolicy/fault-injection-50-percent-abort -o yaml

kubectl get backendtrafficpolicy/fault-injection-delay -o yaml

GRPCRoute

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: fault-injection-abort
spec:
  targetRefs:
    - group: gateway.networking.k8s.io
      kind: GRPCRoute
      name: yages
  faultInjection:
    abort:
      grpcStatus: 14
---
apiVersion: gateway.networking.k8s.io/v1alpha2
kind: GRPCRoute
metadata:
  name: yages
  labels:
    example: grpc-routing
spec:
  parentRefs:
    - name: example-gateway
  hostnames:
    - "grpc-example.com"
  rules:
    - backendRefs:
        - group: ""
          kind: Service
          name: yages
          port: 9000
          weight: 1
EOF

A BackendTrafficPolicy has been created that targets GRPCRoute yages and aborts requests to the yages service with gRPC status 14 (Unavailable).

Verify the GRPCRoute configuration and status:

kubectl get grpcroute/yages -o yaml

Verify the BackendTrafficPolicy configuration:

kubectl get backendtrafficpolicy/fault-injection-abort -o yaml

Testing

Ensure the GATEWAY_HOST environment variable from the Quickstart is set. If not, follow the Quickstart instructions to set the variable.

echo $GATEWAY_HOST

HTTPRoute

Verify that about half of the requests to the foo route are aborted.

hey -n 1000 -c 100 -host "www.example.com" http://${GATEWAY_HOST}/foo

Status code distribution:

[200] 501 responses

[501] 499 responses
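To compare the observed abort rate with the configured percentage, the status code distribution can be reduced to a single number. The sketch below assumes the distribution lines have the form printed by hey in this task. The helper name abort_percentage is hypothetical.

```shell
#!/bin/sh
# abort_percentage CODE
# Reads hey "Status code distribution" lines on stdin, for example
#   [200] 501 responses
# and prints the share of responses with status CODE as a percentage.
abort_percentage() {
  awk -v code="[$1]" '
    $3 == "responses" {
      total += $2
      if ($1 == code) aborted += $2
    }
    END { if (total > 0) printf "%.1f\n", 100 * aborted / total }
  '
}

# Distribution copied from the run in this task.
printf '%s\n' '[200] 501 responses' '[501] 499 responses' | abort_percentage 501
```

For this run the helper prints 49.9, which is close to the configured value of 50. Because each request is aborted independently with the configured probability, small deviations between runs are expected.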

Verify that requests to the bar route are delayed.

hey -n 1000 -c 100 -host "www.example.com" http://${GATEWAY_HOST}/bar

Summary:

Total: 20.1493 secs

Slowest: 2.1020 secs

Fastest: 1.9940 secs

Average: 2.0123 secs

Requests/sec: 49.6295

Total data: 557000 bytes

Size/request: 557 bytes

Response time histogram:

1.994 [1] |

2.005 [475] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

2.016 [419] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■

2.026 [5] |

2.037 [0] |

2.048 [0] |

2.059 [30] |■■■

2.070 [0] |

2.080 [0] |

2.091 [11] |■

2.102 [59] |■■■■■
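The total run time of roughly 20 seconds is consistent with the fixed delay: 1000 requests at a concurrency of 100 need 10 sequential rounds, and each round takes about 2 seconds. The sketch below computes this lower bound, ignoring backend latency. The helper name expected_total_seconds is hypothetical.

```shell
#!/bin/sh
# expected_total_seconds REQUESTS CONCURRENCY DELAY_SECONDS
# Rough lower bound on total run time when every request is delayed by
# DELAY_SECONDS and backend latency is ignored. Hypothetical helper.
expected_total_seconds() {
  requests=$1
  concurrency=$2
  delay=$3
  # Number of sequential rounds, rounded up.
  rounds=$(( (requests + concurrency - 1) / concurrency ))
  echo $(( rounds * delay ))
}

expected_total_seconds 1000 100 2
```

The call prints 20. The measured total of 20.1493 seconds is slightly higher because each request also includes the normal backend response time.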

GRPCRoute

Verify that requests to the yages service are aborted.

grpcurl -plaintext -authority=grpc-example.com ${GATEWAY_HOST}:80 yages.Echo/Ping

You should see the following response:

Error invoking method "yages.Echo/Ping": rpc error: code = Unavailable desc = failed to query for service descriptor "yages.Echo": fault filter abort

Clean-Up

Follow the steps from the Quickstart to uninstall Envoy Gateway and the example manifest.

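Delete the BackendTrafficPolicy resources created in this task:

kubectl delete backendtrafficpolicy/fault-injection-50-percent-abort backendtrafficpolicy/fault-injection-delay backendtrafficpolicy/fault-injection-abort --ignore-not-found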

2.8 - Gateway Address

The Gateway API provides an optional Addresses field through which Envoy Gateway can set addresses for the Envoy Proxy Service. Depending on the Service type, the Gateway addresses can be used as External IPs or as the Cluster IP, as described in the sections below.

Prerequisites

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

External IPs

To use the addresses in Gateway.Spec.Addresses as the External IPs of the Envoy Proxy Service, the address must be of type IPAddress and the Service type must be LoadBalancer or NodePort.

Envoy Gateway deploys the Envoy Proxy Service as LoadBalancer by default, so you can set the address of the Gateway directly (the address used here is for reference only):

kubectl patch gateway eg --type=json --patch '
  - op: add
    path: /spec/addresses
    value:
      - type: IPAddress
        value: 1.2.3.4
'

Verify the Gateway status:

kubectl get gateway

NAME   CLASS   ADDRESS   PROGRAMMED   AGE
eg     eg      1.2.3.4   True         14m

Verify the Envoy Proxy Service status:

kubectl get service -n envoy-gateway-system

NAME                            TYPE           CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
envoy-default-eg-64656661       LoadBalancer   10.96.236.219   1.2.3.4       80:31017/TCP   15m
envoy-gateway                   ClusterIP      10.96.192.76    <none>        18000/TCP      15m
envoy-gateway-metrics-service   ClusterIP      10.96.124.73    <none>        8443/TCP       15m

Note: If Gateway.Spec.Addresses is explicitly set, those are the only addresses that populate the Gateway status.

Cluster IP

To use the addresses in Gateway.Spec.Addresses as the Cluster IP of the Envoy Proxy Service, the address must be of type IPAddress and the Service type must be ClusterIP.
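Because the Envoy Proxy Service is a LoadBalancer by default, the Service type has to be changed before a Cluster IP address can take effect. The sketch below writes an EnvoyProxy resource that requests a ClusterIP Service. The resource name, namespace, and file name are assumptions chosen for illustration, and the resource still has to be attached to the Gateway or GatewayClass as described in the Customize EnvoyProxy task.

```shell
#!/bin/sh
# Write an EnvoyProxy resource that asks for a ClusterIP Service.
# Name, namespace, and file name are illustrative assumptions.
cat > envoyproxy-clusterip.yaml <<'EOF'
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: EnvoyProxy
metadata:
  name: clusterip-proxy-config
  namespace: envoy-gateway-system
spec:
  provider:
    type: Kubernetes
    kubernetes:
      envoyService:
        type: ClusterIP
EOF
echo "wrote envoyproxy-clusterip.yaml"
```

After the proxy configuration is in place, the Gateway address can be set with the same kubectl patch approach used for External IPs, using an address that is valid for the cluster's Service IP range.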

2.9 - Gateway API Support

As mentioned in the system design document, Envoy Gateway’s managed data plane is configured dynamically through Kubernetes resources, primarily Gateway API objects. Envoy Gateway supports configuration using the following Gateway API resources.

GatewayClass

A GatewayClass represents a “class” of gateways, i.e. which Gateways should be managed by Envoy Gateway.

Envoy Gateway supports managing a single GatewayClass resource that matches its configured controllerName and follows Gateway API guidelines for resolving conflicts when multiple GatewayClasses exist with a matching controllerName.

Note: If specifying GatewayClass parameters reference, it must refer to an EnvoyProxy resource.

Gateway

When a Gateway resource is created that references the managed GatewayClass, Envoy Gateway will create and manage a new Envoy Proxy deployment. Gateway API resources that reference this Gateway will configure this managed Envoy Proxy deployment.

HTTPRoute

An HTTPRoute configures routing of HTTP traffic through one or more Gateways. The following HTTPRoute filters are supported by Envoy Gateway:

- requestHeaderModifier: RequestHeaderModifiers can be used to modify or add request headers before the request is proxied to its destination.

- responseHeaderModifier: ResponseHeaderModifiers can be used to modify or add response headers before the response is sent back to the client.

- requestMirror: RequestMirrors configure destinations where the requests should also be mirrored to. Responses to mirrored requests will be ignored.

- requestRedirect: RequestRedirects configure policies for how requests that match the HTTPRoute should be modified and then redirected.

- urlRewrite: UrlRewrites allow for modification of the request’s hostname and path before it is proxied to its destination.

- extensionRef: ExtensionRefs are used by Envoy Gateway to implement extended filters. Currently, Envoy Gateway supports rate limiting and request authentication filters. For more information about these filters, refer to the rate limiting and request authentication documentation.

Notes:

- The only BackendRef kind supported by Envoy Gateway is a Service. Routing traffic to other destinations such as arbitrary URLs is not possible.

- Only requestHeaderModifier and responseHeaderModifier filters are currently supported within HTTPBackendRef.
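As a minimal sketch of how two of these filters are expressed, the script below writes an HTTPRoute that adds a request header and sets a response header at the rule level. The route name, header names, header values, and file name are hypothetical; the parent Gateway eg and the backend Service are the ones from the Quickstart.

```shell
#!/bin/sh
# Write an HTTPRoute that uses the RequestHeaderModifier and
# ResponseHeaderModifier filters. Names and header values are illustrative.
cat > header-modifier-route.yaml <<'EOF'
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: header-modifier-example
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "www.example.com"
  rules:
    - filters:
        - type: RequestHeaderModifier
          requestHeaderModifier:
            add:
              - name: x-request-source
                value: envoy-gateway
        - type: ResponseHeaderModifier
          responseHeaderModifier:
            set:
              - name: x-served-by
                value: envoy-gateway
      backendRefs:
        - name: backend
          port: 3000
EOF
echo "wrote header-modifier-route.yaml"
```

The file can be applied with `kubectl apply -f header-modifier-route.yaml`. The HTTP Request Headers and HTTP Response Headers tasks cover these filters in more detail.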

TCPRoute

A TCPRoute configures routing of raw TCP traffic through one or more Gateways. Traffic can be forwarded to the desired BackendRefs based on a TCP port number.

Note: A TCPRoute only supports proxying in non-transparent mode, i.e. the backend will see the source IP and port of the Envoy Proxy instance instead of the client.

UDPRoute

A UDPRoute configures routing of raw UDP traffic through one or more Gateways. Traffic can be forwarded to the desired BackendRefs based on a UDP port number.

Note: Similar to TCPRoutes, UDPRoutes only support proxying in non-transparent mode i.e. the backend will see the source IP and port of the Envoy Proxy instance instead of the client.

GRPCRoute

A GRPCRoute configures routing of gRPC requests through one or more Gateways. They offer request matching by hostname, gRPC service, gRPC method, or HTTP/2 Header. Envoy Gateway supports the following filters on GRPCRoutes to provide additional traffic processing:

- requestHeaderModifier: RequestHeaderModifiers can be used to modify or add request headers before the request is proxied to its destination.

- responseHeaderModifier: ResponseHeaderModifiers can be used to modify or add response headers before the response is sent back to the client.

- requestMirror: RequestMirrors configure destinations where the requests should also be mirrored to. Responses to mirrored requests will be ignored.

Notes:

- The only BackendRef kind supported by Envoy Gateway is a Service. Routing traffic to other destinations such as arbitrary URLs is not currently possible.

- Only requestHeaderModifier and responseHeaderModifier filters are currently supported within GRPCBackendRef.

TLSRoute

A TLSRoute configures routing of TCP traffic through one or more Gateways. However, unlike TCPRoutes, TLSRoutes can match against TLS-specific metadata.

ReferenceGrant

A ReferenceGrant is used to allow a resource to reference another resource in a different namespace. Normally an HTTPRoute created in namespace foo is not allowed to reference a Service in namespace bar. A ReferenceGrant permits these types of cross-namespace references. Envoy Gateway supports the following ReferenceGrant use-cases:

- Allowing an HTTPRoute, GRPCRoute, TLSRoute, UDPRoute, or TCPRoute to reference a Service in a different namespace.

- Allowing an HTTPRoute’s requestMirror filter to include a BackendRef that references a Service in a different namespace.

- Allowing a Gateway’s SecretObjectReference to reference a secret in a different namespace.
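The first use-case can be sketched with the foo and bar namespaces from the description above. The script below writes a ReferenceGrant that lives in namespace bar, next to the referenced Service, and permits HTTPRoutes from namespace foo. The resource name and file name are hypothetical.

```shell
#!/bin/sh
# Write a ReferenceGrant that lets HTTPRoutes in namespace foo reference
# Services in namespace bar. The resource name is a hypothetical example.
cat > reference-grant.yaml <<'EOF'
apiVersion: gateway.networking.k8s.io/v1beta1
kind: ReferenceGrant
metadata:
  name: allow-foo-routes-to-bar-services
  namespace: bar
spec:
  from:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      namespace: foo
  to:
    - group: ""
      kind: Service
EOF
echo "wrote reference-grant.yaml"
```

The grant is created in the namespace of the target resource, so the owner of namespace bar decides which namespaces may reference its Services. It can be applied with `kubectl apply -f reference-grant.yaml`.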

2.10 - Global Rate Limit

Rate limit is a feature that allows the user to limit the number of incoming requests to a predefined value based on attributes within the traffic flow.

Here are some reasons why you may want to implement rate limits:

- To prevent malicious activity such as DDoS attacks.

- To prevent an application and its resources (such as a database) from getting overloaded.

- To create API limits based on user entitlements.

Envoy Gateway supports two types of rate limiting: Global rate limiting and Local rate limiting.

Global rate limiting applies a shared rate limit to the traffic flowing through all the instances of Envoy proxies where it is configured. For example, if the data plane has 2 replicas of Envoy running and the rate limit is 10 requests/second, this limit is shared and will be hit if 5 requests pass through the first replica and 5 requests pass through the second replica within the same second.

Envoy Gateway introduces a new CRD called BackendTrafficPolicy that allows the user to describe their rate limit intent. This instantiated resource can be linked to a Gateway, HTTPRoute or GRPCRoute resource.

Note: Limit is applied per route. Even if a BackendTrafficPolicy targets a gateway, each route in that gateway still has a separate rate limit bucket. For example, if a gateway has 2 routes, and the limit is 100r/s, then each route has its own 100r/s rate limit bucket.
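The two properties described above, a limit shared across replicas and a separate bucket per route, can be illustrated with a small simulation. This is only a sketch of the counting behavior for a single one-second window with a limit of 10; it does not model Redis or the actual rate limit service, and all variable names are hypothetical.

```shell
#!/bin/sh
# Simulate one second of traffic against a global limit of 10 requests/second.
# Each route has its own bucket, but a route's bucket is shared by all replicas.
limit=10
route_a_used=0   # bucket for route A, shared by replica 1 and replica 2
route_b_used=0   # bucket for route B, independent of route A
allowed=0
limited=0

# 12 requests to route A in the same second, alternating between two replicas.
i=1
while [ "$i" -le 12 ]; do
  replica=$(( (i % 2) + 1 ))   # which replica handled it; the bucket is the same
  if [ "$route_a_used" -lt "$limit" ]; then
    route_a_used=$(( route_a_used + 1 ))
    allowed=$(( allowed + 1 ))
  else
    limited=$(( limited + 1 ))
    echo "request $i via replica $replica to route A: limited"
  fi
  i=$(( i + 1 ))
done

# One request to route B still succeeds because route B has its own bucket.
if [ "$route_b_used" -lt "$limit" ]; then
  route_b_used=$(( route_b_used + 1 ))
  route_b_result=allowed
else
  route_b_result=limited
fi

echo "route A: $allowed allowed, $limited limited"
echo "route B: $route_b_result"
```

Requests 11 and 12 to route A are limited regardless of which replica receives them, while the request to route B is allowed because its bucket is still empty.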

Prerequisites

Install Envoy Gateway

Follow the steps from the Quickstart task to install Envoy Gateway and the example manifest. Before proceeding, you should be able to query the example backend using HTTP.

Verify the Gateway status:

kubectl get gateway/eg -o yaml

egctl x status gateway -v

Install Redis

- The global rate limit feature is based on Envoy Ratelimit, which requires a Redis instance as its caching layer. Let's install a Redis deployment in the redis-system namespace.

cat <<EOF | kubectl apply -f -
kind: Namespace
apiVersion: v1
metadata:
  name: redis-system
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
  namespace: redis-system
  labels:
    app: redis
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      containers:
        - image: redis:6.0.6
          imagePullPolicy: IfNotPresent
          name: redis
          resources:
            limits:
              cpu: 1500m
              memory: 512Mi
            requests:
              cpu: 200m
              memory: 256Mi
---
apiVersion: v1
kind: Service
metadata:
  name: redis
  namespace: redis-system
  labels:
    app: redis
  annotations:
spec:
  ports:
    - name: redis
      port: 6379
      protocol: TCP
      targetPort: 6379
  selector:
    app: redis
EOF

Enable Global Rate limit in Envoy Gateway

- The default installation of Envoy Gateway installs a default EnvoyGateway configuration and attaches it using a ConfigMap. In the next step, we will update this resource to enable rate limiting in Envoy Gateway and to configure the URL of the Redis instance used for global rate limiting.

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: ConfigMap
metadata:
  name: envoy-gateway-config
  namespace: envoy-gateway-system
data:
  envoy-gateway.yaml: |
    apiVersion: gateway.envoyproxy.io/v1alpha1
    kind: EnvoyGateway
    provider:
      type: Kubernetes
    gateway:
      controllerName: gateway.envoyproxy.io/gatewayclass-controller
    rateLimit:
      backend:
        type: Redis
        redis:
          url: redis.redis-system.svc.cluster.local:6379
EOF

Save and apply the following resource to your cluster:

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: envoy-gateway-config
  namespace: envoy-gateway-system
data:
  envoy-gateway.yaml: |
    apiVersion: gateway.envoyproxy.io/v1alpha1
    kind: EnvoyGateway
    provider:
      type: Kubernetes
    gateway:
      controllerName: gateway.envoyproxy.io/gatewayclass-controller
    rateLimit:
      backend:
        type: Redis
        redis:
          url: redis.redis-system.svc.cluster.local:6379
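To check that the ConfigMap now carries the rateLimit section, the stored configuration can be read back from the cluster. This is a sketch only; the helper name show_envoy_gateway_config is hypothetical, and the command assumes the ConfigMap name and namespace used in this guide.

```shell
# Hypothetical helper: print the EnvoyGateway configuration stored in the ConfigMap.
# The dot in the "envoy-gateway.yaml" key is escaped with a backslash so that
# kubectl's JSONPath parser treats it as a single key rather than a nested path.
show_envoy_gateway_config() {
  kubectl get configmap envoy-gateway-config -n envoy-gateway-system \
    -o jsonpath='{.data.envoy-gateway\.yaml}'
}
```

Piping the result through a filter, for example show_envoy_gateway_config | grep -A 5 'rateLimit:', shows only the rate limit backend settings.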

After updating the ConfigMap, you will need to wait for the new configuration to take effect. You can force the configuration to be reloaded by restarting the envoy-gateway deployment:

kubectl rollout restart deployment envoy-gateway -n envoy-gateway-system
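A restart returns immediately, so later steps may run before the new configuration is active. One way to avoid that, sketched here under the assumption that waiting for the rollout is sufficient, is to chain the restart with kubectl rollout status. The helper name restart_envoy_gateway and the 120s default timeout are illustrative.

```shell
# Hypothetical helper: restart the envoy-gateway Deployment and wait until the
# rollout has completed. The optional first argument overrides the timeout.
restart_envoy_gateway() {
  kubectl rollout restart deployment envoy-gateway -n envoy-gateway-system &&
    kubectl rollout status deployment/envoy-gateway \
      -n envoy-gateway-system --timeout="${1:-120s}"
}
```

The function returns a non-zero exit status if either the restart fails or the rollout does not finish within the timeout.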

Rate Limit Specific User

Here is an example of a rate limit implemented by the application developer to limit a specific user by matching on a custom x-user-id header with a value set to one.

cat <<EOF | kubectl apply -f -
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: policy-httproute
spec:
  targetRefs:
  - group: gateway.networking.k8s.io
    kind: HTTPRoute
    name: http-ratelimit
  rateLimit:
    type: Global
    global:
      rules:
      - clientSelectors:
        - headers:
          - name: x-user-id
            value: one
        limit:
          requests: 3
          unit: Hour
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: policy-httproute
spec:
  targetRefs:
  - group: gateway.networking.k8s.io
    kind: HTTPRoute
    name: http-ratelimit
  rateLimit:
    type: Global
    global:
      rules:
      - clientSelectors:
        - headers:
          - name: x-user-id
            value: one
        limit:
          requests: 3
          unit: Hour

HTTPRoute

cat <<EOF | kubectl apply -f -
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: http-ratelimit
spec:
  parentRefs:
  - name: eg
  hostnames:
  - ratelimit.example
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /
    backendRefs:
    - group: ""
      kind: Service
      name: backend
      port: 3000
EOF

Save and apply the following resource to your cluster:

---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: http-ratelimit
spec:
  parentRefs:
  - name: eg
  hostnames:
  - ratelimit.example
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /
    backendRefs:
    - group: ""
      kind: Service
      name: backend
      port: 3000

The HTTPRoute status should indicate that it has been accepted and is bound to the example Gateway.

kubectl get httproute/http-ratelimit -o yaml
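The full YAML output is verbose when only the acceptance state matters. As a rough sketch, the Accepted condition can be pulled out with a JSONPath filter. The helper name route_accepted is hypothetical, and the expression assumes the route has a single parent Gateway, so only the first entry under status.parents is inspected.

```shell
# Hypothetical helper: print the status ("True" or "False") of the Accepted
# condition reported for the first parent of the http-ratelimit HTTPRoute.
# Assumes one parentRef, as in the HTTPRoute defined in this guide.
route_accepted() {
  kubectl get httproute/http-ratelimit \
    -o jsonpath='{.status.parents[0].conditions[?(@.type=="Accepted")].status}'
}
```

An empty result usually means the controller has not written a status for the route yet.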

Get the Gateway’s address:

export GATEWAY_HOST=$(kubectl get gateway/eg -o jsonpath='{.status.addresses[0].value}')

Let’s query ratelimit.example/get 4 times. We should receive a 200 response from the example Gateway for the first 3 requests and then a 429 status code for the 4th request, since the limit is set at 3 requests per hour for requests that contain the header x-user-id with the value one.

for i in {1..4}; do curl -I --header "Host: ratelimit.example" --header "x-user-id: one" http://${GATEWAY_HOST}/get ; sleep 1; done

HTTP/1.1 200 OK
content-type: application/json
x-content-type-options: nosniff
date: Wed, 08 Feb 2023 02:33:31 GMT
content-length: 460
x-envoy-upstream-service-time: 4
server: envoy

HTTP/1.1 200 OK
content-type: application/json
x-content-type-options: nosniff
date: Wed, 08 Feb 2023 02:33:32 GMT
content-length: 460
x-envoy-upstream-service-time: 2
server: envoy

HTTP/1.1 200 OK
content-type: application/json
x-content-type-options: nosniff
date: Wed, 08 Feb 2023 02:33:33 GMT
content-length: 460
x-envoy-upstream-service-time: 0
server: envoy

HTTP/1.1 429 Too Many Requests
x-envoy-ratelimited: true
date: Wed, 08 Feb 2023 02:33:34 GMT
server: envoy
transfer-encoding: chunked
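Reading full response headers gets tedious once the loop grows beyond a few requests. The sketch below reduces the output of curl -I to a count per status code. The helper name summarize_status_codes is an illustrative assumption; it relies only on awk and sort.

```shell
# Hypothetical helper: read HTTP response headers on stdin and print one line
# per status code in the form "<count> <code>", ordered by status code.
summarize_status_codes() {
  awk '/^HTTP\// { count[$2]++ }
       END { for (code in count) print count[code], code }' | sort -k2,2n
}

# Example usage against the Gateway (requires GATEWAY_HOST to be set):
#   for i in {1..4}; do
#     curl -sI --header "Host: ratelimit.example" --header "x-user-id: one" \
#       "http://${GATEWAY_HOST}/get"
#   done | summarize_status_codes
```

For the four responses shown above, the helper prints "3 200" followed by "1 429", which matches the configured limit of 3 requests per hour.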

You should be able to send requests with the x-user-id header and a different value and receive successful responses from the server.

for i in {1..4}; do curl -I --header "Host: ratelimit.example" --header "x-user-id: two" http://${GATEWAY_HOST}/get ; sleep 1; done

HTTP/1.1 200 OK

content-type: application/json

x-content-type-options: nosniff

date: Wed, 08 Feb 2023 02:34:36 GMT

content-length: 460